The Practical Use of Earned Value

Earned Value reporting is a very powerful tool for keeping projects on track but it is rarely used in IT. What are the challenges and how can they be overcome?

It is easy to understand that Earned Value provides a concise and timely view of project progress, enabling early forecasting and resolution of cost and schedule issues.

When it comes to implementation, however, the devil is in the details. Detailed information must be captured and analyzed, and that takes time and effort, as does understanding why the detail is needed.

Earned Value also requires a breakdown of schedule and budget that matches the setup of a timekeeping system where actual effort is recorded. Why invest this time and effort? Shouldn't the focus be on proper handling of the "soft issues" (i.e. the people side) since this is more important to project success than process and analysis?

Even if the details are taken care of and reports are being used to identify and correct performance issues, overall progress can be distorted by quality issues not presented in Earned Value reports. A software development project can have wonderful cost and schedule performance indices, but how do we know if the product will meet the customer's requirements? Often, conformance to requirements is not properly evaluated until the testing phase of the project. Discovery of major conformance issues at this late stage, on a project that has otherwise been performing well against cost and schedule, calls into question the validity of all previous Earned Value reports.

Why Invest?

Earned Value is indispensable as a communication tool for handling the "soft issues." Project management is about managing stakeholder expectations and the management of stakeholder expectations relies critically on communication. Earned Value reports provide important communication content. They concisely and objectively indicate the cost and schedule status of the project, enabling focused discussion on the root causes of and alternative resolutions to performance issues. Objective facilitation of such discussions, to identify and justify actions that each stakeholder must own in order to meet expectations, becomes much more effective when Earned Value reporting is used to measure progress.

What about the time and effort involved in collecting, analyzing and reporting the details? Most software projects have a schedule and budget breakdown and many require team members to record their effort in some kind of timekeeping system. Often, however, the timekeeping system setup does not match the schedule and budget breakdown, so that it is very difficult, or impossible, to match actual effort against planned effort. This occurs when there is poor coordination between the administrators of the timekeeping system (often the Accounting department) and the project management team. In this situation, generating Earned Value reports based on the non-matching setup in the timekeeping system is not worth the cost. The list of projects and tasks in the timekeeping system must be changed to match the project schedule and budget breakdown. Earned Value can then be used to measure progress from the date the timekeeping system is changed. The resulting improved communications will outweigh the costs of changing the timekeeping setup.
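One quick way to see whether the timekeeping setup matches the schedule and budget breakdown is to compare the two lists of task codes. The following is a minimal sketch only: the function name and the task codes are hypothetical, and it assumes both systems can export their task lists as plain strings.

```python
def compare_breakdowns(schedule_tasks, timekeeping_tasks):
    """Return the task codes that appear in only one of the two systems."""
    schedule = set(schedule_tasks)
    timekeeping = set(timekeeping_tasks)
    return {
        # Planned work that nobody can book time against.
        "missing_from_timekeeping": sorted(schedule - timekeeping),
        # Time buckets that have no planned budget behind them.
        "not_in_schedule": sorted(timekeeping - schedule),
    }


# Hypothetical task codes, for illustration only.
mismatches = compare_breakdowns(
    ["P1-DESIGN", "P1-BUILD", "P1-TEST"],
    ["P1-DESIGN", "P1-BUILD", "ADMIN"],
)
print(mismatches)
```

If both lists come back empty, actual effort can be matched against planned effort task by task.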

If the timekeeping system has been set up to match the schedule and budget breakdown, capture and analysis of the detail data is straightforward. Team members must provide hours worked and percent-complete figures for the tasks they are assigned. Expected (planned) percent-complete figures are obtained from the project schedule. This data is entered, on a regular basis, into the timekeeping software. If the timekeeping software does not have an Earned Value module, one-time setup of a simple spreadsheet will be necessary. The spreadsheet calculates Earned Value (actual percent complete times budgeted hours), planned value (expected percent complete times budgeted hours) and performance indices (CPI and SPI).
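The spreadsheet calculations can be sketched in a few lines of code. The Earned Value and planned value formulas are the ones given above. The article does not spell out the index formulas, so this sketch assumes the standard definitions, with everything measured in hours: CPI is Earned Value divided by actual hours, and SPI is Earned Value divided by planned value. The class name, field names and sample figures are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class TaskStatus:
    name: str
    budgeted_hours: float
    actual_hours: float   # hours booked in the timekeeping system
    actual_pct: float     # reported percent complete, as a fraction (0.0 to 1.0)
    planned_pct: float    # expected percent complete from the schedule


def earned_value(t: TaskStatus) -> float:
    return t.actual_pct * t.budgeted_hours


def planned_value(t: TaskStatus) -> float:
    return t.planned_pct * t.budgeted_hours


def cpi(t: TaskStatus):
    """Cost performance index (assumed definition: EV / actual hours)."""
    return earned_value(t) / t.actual_hours if t.actual_hours else None


def spi(t: TaskStatus):
    """Schedule performance index (assumed definition: EV / PV)."""
    pv = planned_value(t)
    return earned_value(t) / pv if pv else None


# Made-up figures: 100 budgeted hours, 60 hours spent,
# 50% actually complete against 60% planned.
task = TaskStatus("Build login page", 100, 60, 0.50, 0.60)
print(f"EV={earned_value(task):.1f}  PV={planned_value(task):.1f}  "
      f"CPI={cpi(task):.2f}  SPI={spi(task):.2f}")
```

An index below 1.0 means the task is costing more (CPI) or progressing more slowly (SPI) than planned.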

Setting up the spreadsheet is a simple matter of applying the Earned Value formulas at the level of detail desired for progress tracking. Time and effort can be reduced significantly by tracking at the highest (most summarized) level that is still useful. For example, in a multiple-project environment where there are many small projects lasting two months or less, it is reasonable to present Earned Value statistics for each project rather than for each task. The benefits of Earned Value reporting will substantially exceed the cost of creating the spreadsheet.
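Reporting per project still starts from task-level data; the totals are simply summed before the indices are calculated. The sketch below shows one way to do that roll-up. The row layout, project names and figures are invented for illustration, and the index definitions (CPI as Earned Value over actual hours, SPI as Earned Value over planned value) are the standard ones, assumed here because the article does not state them.

```python
from collections import defaultdict


def project_indices(rows):
    """Roll task rows up to one CPI/SPI pair per project.

    Each row is (project, budgeted_hours, actual_hours, actual_pct, planned_pct),
    with percentages expressed as fractions between 0.0 and 1.0.
    """
    totals = defaultdict(lambda: {"ev": 0.0, "pv": 0.0, "ac": 0.0})
    for project, budget, actual, actual_pct, planned_pct in rows:
        t = totals[project]
        t["ev"] += actual_pct * budget    # Earned Value
        t["pv"] += planned_pct * budget   # planned value
        t["ac"] += actual                 # actual hours
    return {
        project: {
            "CPI": t["ev"] / t["ac"] if t["ac"] else None,
            "SPI": t["ev"] / t["pv"] if t["pv"] else None,
        }
        for project, t in totals.items()
    }


# Made-up task data for two small projects.
rows = [
    ("Project A", 40, 30, 1.00, 1.00),
    ("Project A", 60, 20, 0.25, 0.50),
    ("Project B", 100, 50, 0.50, 0.50),
]
report = project_indices(rows)
for project, indices in report.items():
    print(project, indices)
```

Summing Earned Value, planned value and actual hours first, and dividing afterwards, weights each task by its budget instead of averaging the task-level indices.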

The Missing Piece

When the performance indices (CPI and SPI) are graphically displayed over time, potential future issues, which can be resolved through immediate action, are clearly identified. This makes Earned Value a very powerful tool for forecasting and mitigating risks. It would be nice if we could rely on one report to provide a complete and accurate picture of project progress for all stakeholders. Here is an idea.

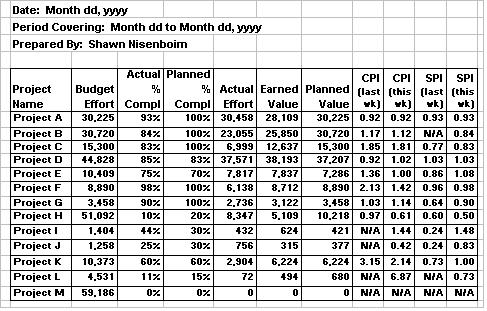

A typical earned value report might look like the following:

Typical earned value report - identifies problem projects by showing cost and schedule performance indices.

Let's say this report is circulated to the development team in advance of its weekly status review meeting to focus discussion on projects performing below expectations. From this report, you can immediately see that Project H has poor cost and schedule performance that is getting worse. This project requires immediate attention, and the cause of poor performance will likely be a key focus of the meeting. In all likelihood, a follow-up meeting will be arranged to discuss alternative actions.
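The screening that a reader does by eye on such a report can also be automated. The sketch below flags a project when its latest CPI or SPI is below 1.0 and notes whether either index has dropped since the previous period. The threshold, the labels and the index histories are assumptions made for illustration; they are not the figures from the report shown above.

```python
def classify(history, threshold=1.0):
    """Label a project from its (CPI, SPI) history, oldest period first."""
    cpi_now, spi_now = history[-1]
    if cpi_now >= threshold and spi_now >= threshold:
        return "on track"
    if len(history) >= 2:
        cpi_prev, spi_prev = history[-2]
        # "Worsening" here means at least one index fell since last period.
        if cpi_now < cpi_prev or spi_now < spi_prev:
            return "below plan and worsening"
    return "below plan"


# Hypothetical index histories for three projects.
histories = {
    "Project D": [(1.05, 1.02), (1.08, 1.04)],
    "Project H": [(0.90, 0.85), (0.80, 0.75)],
    "Project K": [(0.80, 0.80), (0.90, 0.90)],
}
labels = {name: classify(h) for name, h in histories.items()}
for name, label in labels.items():
    print(f"{name}: {label}")
```

A listing like this only decides which projects to discuss first; the causes still have to be worked out in the status review.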

Some of the other projects on the report look like they are doing fine and are unlikely to attract much attention. Project D, for example, has favourable cost and schedule indices. What we don't know about Project D, however, is how well the developing product will conform to requirements when complete.

What if we introduced another column to the report, called "Quality Performance" (QPI), that measures how well the product appears to be conforming to customer requirements? This measure could be as simple as a rating provided informally by a peer or subject matter expert. A rating of 0.90, for example, would mean that, in the opinion of the reviewer(s), the components of the developing product that are available for review show 90% conformance to requirements.

Note that in the case of Project D, availability of product components for review should correspond to a reported 85% complete status for the project. If, in the opinion of the reviewer(s), availability of reviewable components does not correspond, the quality performance rating will be lowered accordingly. The additional review time will reduce administration effort by enabling more efficient communications. It will also save time and cost during the testing phase of the project by reducing rework. These savings will more than offset the extra review time.

Finally, by multiplying the three performance statistics together (CPI times SPI times QPI), we would have a single number that provides a rating for the project's overall progress.
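As a small worked sketch of that combined rating, the function below multiplies the three indices. The function name and the sample values are hypothetical; they are chosen only to show how a quality rating below 1.0 pulls down a project whose cost and schedule indices look favourable.

```python
def overall_rating(cpi: float, spi: float, qpi: float) -> float:
    """Single progress rating: CPI times SPI times QPI."""
    return cpi * spi * qpi


# Hypothetical project: ahead on cost and schedule,
# but reviewers rate conformance to requirements at 0.70.
cost_schedule_only = overall_rating(1.05, 1.10, 1.0)
with_quality = overall_rating(1.05, 1.10, 0.70)
print(f"Without quality review: {cost_schedule_only:.2f}")
print(f"With QPI of 0.70:       {with_quality:.2f}")
```

Because the three numbers are multiplied, a weak result on any one index lowers the overall rating even when the other two are above 1.0.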

Using Earned Value to report progress on IT projects will improve communications and lead to earlier resolution of issues. Implementation requires a timekeeping system that is set up to be consistent with the schedule and budget planning processes. Collecting the data is straightforward and analyzing it can be easily automated with a simple spreadsheet. There is potential for a single report to provide a concise, accurate and complete picture of a project's progress by conducting periodic reviews of product conformance to requirements.

Written by Shawn Nisenboim of Indigo Technologies, this article was originally published in the Fall 2002 issue of Project Times Magazine. For more information about how you can painlessly implement Earned Value methods at your organization, e-mail us at sales@indigo1.com.
